For more details on the underlying data, see Sample database. The following examples use the sample database (TICKIT) provided by AWS. To authenticate to Redshift using IAM Authentication or Okta, see the Apache Airflow documentation. If your Airflow instance is running in the same AWS VPC as your Redshift cluster, you may have other authentication options available. Permission to interact with the Redshift cluster, specifically:.Read/write permissions for a preconfigured S3 Bucket.Give aws_default the following permissions on AWS:.Allow inbound traffic from the IP Address where Airflow is running.Use the following parameters for your new connection (all other fields can be left blank):Ĭonfigure the following in your Redshift cluster: If you use a name other than redshift_default for this connection, you'll need to specify it in the modules that require a Redshift connection.

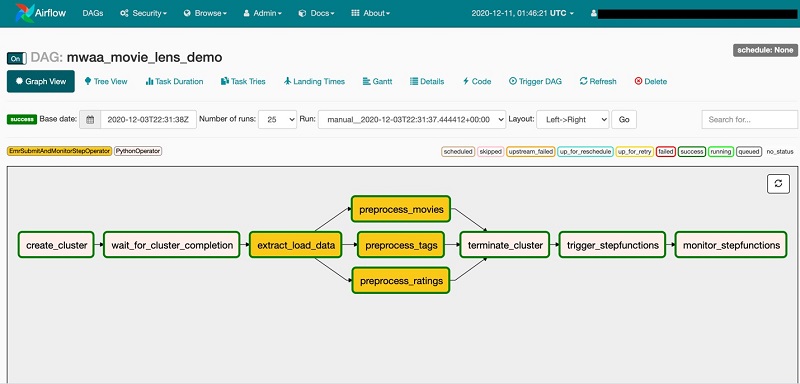

Redshift_default: The default connection that Airflow Redshift modules use. In the Airflow UI, go to Admin > Connections and add the following connections: The most common way of doing this is by configuring an Airflow connection. Otherwise, run pip install apache-airflow-providers-amazon.Ĭonnect your Airflow instance to Redshift. If you are working with the Astro CLI, add apache-airflow-providers-amazon to the requirements.txt file of your Astro project. To use Redshift operators in Airflow, you first need to install the Redshift provider package and create a connection to your Redshift cluster. See Managing your Connections in Apache Airflow. Airflow fundamentals, such as writing DAGs and defining tasks.To get the most out of this tutorial, make sure you have an understanding of: You'll also complete sample implementations that execute SQL in a Redshift cluster, pause and resume a Redshift cluster, and transfer data between Amazon S3 and a Redshift cluster.Īll code in this tutorial is located in the GitHub repo. In this tutorial, you'll learn about the Redshift modules that are available in the AWS Airflow provider package. With Airflow, you can orchestrate each step of your Redshift pipeline, integrate with services that clean your data, and store and publish your results using SQL and Python code. This makes Airflow the perfect orchestrator to pair with Redshift. It has become the most popular cloud data warehouse in part because of its ability to analyze exabytes of data and run complex analytical queries.ĭeveloping a dimensional data mart in Redshift requires automation and orchestration for repeated queries, data quality checks, and overall cluster operations. Orchestrate Redshift operations with AirflowĪmazon Redshift is a fully-managed cloud data warehouse.
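The redshift_default connection described in this tutorial is created through the Airflow UI, but Airflow can also read connections from environment variables named AIRFLOW_CONN_ followed by the connection ID in upper case. The following is a minimal sketch of building such a variable, assuming Airflow 2.3 or later (which accepts JSON-serialized connection values). The helper name connection_env_var and the host, database, login, and password values are hypothetical placeholders, not values from this tutorial.

```python
import json


def connection_env_var(conn_id, **fields):
    """Build the (name, value) pair for an Airflow connection environment variable.

    Airflow looks up connections in variables named AIRFLOW_CONN_<CONN_ID>.
    The JSON value format is an assumption that holds for Airflow 2.3+.
    """
    name = f"AIRFLOW_CONN_{conn_id.upper()}"
    value = json.dumps(fields, sort_keys=True)
    return name, value


# All connection details below are placeholders for illustration only.
name, value = connection_env_var(
    "redshift_default",
    conn_type="redshift",  # connection type used by the Amazon provider's Redshift SQL hook
    host="example-cluster.example.us-east-1.redshift.amazonaws.com",  # hypothetical endpoint
    port=5439,  # default Redshift port
    schema="dev",  # hypothetical database name
    login="example_user",  # hypothetical user
    password="example_password",  # hypothetical; prefer a secrets backend in practice
)

# Print a line suitable for a .env file or shell export.
print(f"{name}='{value}'")
```

If you choose a connection ID other than redshift_default, pass that ID to the helper instead and remember to specify it in the modules that require a Redshift connection.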
